R²D²: Boost Robot Training with World Foundation Models and NVIDIA Research Workflows

Sources: https://developer.nvidia.com/blog/r2d2-boost-robot-training-with-world-foundation-models-and-workflows-from-nvidia-research, developer.nvidia.com

TL;DR

- World Foundation Models (WFMs) advance data generation for physical AI by simulating, predicting, and reasoning about future world states.

- NVIDIA Cosmos provides WFMs for robotics and autonomous vehicles, with three post-trainable model types: Cosmos Predict, Cosmos Transfer, and Cosmos Reason.

- Cosmos Predict generates future world states as videos from image, video, and text prompts and supports post-training variants like Single2MultiView for multi-view consistency.

- Cosmos Transfer enables photoreal style transfers and scene generation from multiple control inputs and text prompts to augment synthetic datasets and improve sim-to-real transfer.

- Cosmos Reason is a reasoning vision-language model (VLM) that can curate and annotate data and be post-trained as a robot vision-language-action (VLA) model, trained with supervised fine-tuning (SFT) and reinforcement learning (RL).

- This edition of R²D² highlights synthetic data generation (SDG) workflows and data curation for physical AI, with examples such as supervised fine-tuning on the robovqa dataset and references to workflows from NVIDIA Research.

Context and background

As physical AI systems scale, the demand for richly labeled datasets grows beyond what is feasible to capture manually in the real world. World Foundation Models (WFMs) are designed to simulate, predict, and reason about future world states based on real-world dynamics. NVIDIA Cosmos is a platform for WFM development tailored to physical AI applications such as robotics and autonomous vehicles. Cosmos WFMs come in three post-trainable types—Cosmos Predict, Cosmos Transfer, and Cosmos Reason—which can be adapted for specific tasks and deployed to train physical AI and industrial vision AI for spatial awareness, motion planning, and complex task execution. This NVIDIA Robotics Research and Development Digest (R²D²) details Cosmos WFMs and the workflows from NVIDIA Research, focusing on synthetic data generation (SDG) and data curation for physical AI.

What’s new

Cosmos Predict models can be post-trained for physical AI applications to generate coherent, physically plausible future frames from text, images, or videos. A notable post-training example is Single2MultiView, which produces synchronized multi-view camera footage from a single front-view autonomous driving video to support AV development. Another example is video relighting: the post includes a sample command that applies novel lighting to frames produced by an inverse renderer.

Cosmos Transfer models generate world simulations from diverse control inputs such as segmentation maps, depth, edge maps, lidar scans, keypoints, and HD maps. These modalities let users steer scene composition, while text prompts produce diverse visual features. The illustrated workflows include generating RGB video from a text prompt and an HD map condition.

Cosmos Reason is a world foundation model focused on reasoning for physical AI. It understands physical common sense and can make embodied decisions through long chain-of-thought reasoning. It serves as a data critic during synthetic data generation (SDG) and can be post-trained to function as a robot vision-language-action (VLA) model. The model is trained in two stages: supervised fine-tuning (SFT) and reinforcement learning (RL). SFT can improve performance on specific tasks, for example by training on the robovqa dataset to strengthen robotics visual question answering.

This edition of R²D² highlights workflows that use Cosmos WFMs for synthetic data generation and data curation, underscoring their role in accelerating post-training and improving real-world performance. NVIDIA also points to resources and events for deeper engagement, including opportunities at SIGGRAPH 2025.
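The data-critic role attributed to Cosmos Reason above can be pictured as a filtering step between generation and training. The sketch below is only an illustration of that idea, not NVIDIA's workflow or any Cosmos API: `Clip`, `curate`, and `toy_critic` are hypothetical names, and the keyword-based scorer is a stand-in for whatever plausibility judgment a reasoning model would return.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Clip:
    """A generated clip and the prompt that produced it (hypothetical record)."""
    clip_id: str
    prompt: str


def curate(
    clips: Iterable[Clip],
    critic: Callable[[Clip], float],
    threshold: float = 0.5,
) -> List[Clip]:
    """Keep only clips whose critic score is at or above the threshold."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be in [0, 1]")
    return [clip for clip in clips if critic(clip) >= threshold]


def toy_critic(clip: Clip) -> float:
    """Placeholder scorer in [0, 1]; a real critic would be a reasoning model.

    Assumption for illustration only: prompts that mention 'floating'
    are treated as physically implausible and scored low.
    """
    return 0.1 if "floating" in clip.prompt else 0.9


clips = [
    Clip("a", "robot arm picks up a red cube"),
    Clip("b", "cube floating upward with no support"),
]
kept = curate(clips, toy_critic, threshold=0.5)
print([clip.clip_id for clip in kept])  # prints ['a']
```

Passing the critic in as a function keeps the filtering logic independent of the model behind it, so the placeholder can be swapped for a real scorer without touching `curate`.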

Why it matters (impact for developers/enterprises)

The described Cosmos WFMs address a central bottleneck in physical AI development: the cost and time required to create richly labeled, diverse datasets that support robust perception, planning, and control. By enabling post-training adaptations of Cosmos Predict, Transfer, and Reason, developers can tailor synthetic data generation to specific hardware, tasks, and environments without starting from scratch. This capability supports faster iteration, improved sim-to-real transfer, and enhanced data curation through reasoning-based data annotation and filtering. Enterprises building robotics systems or industrial vision AI can leverage these workflows to expand training datasets efficiently, reduce labeling burden, and improve model reliability in real-world tasks.

Technical details or Implementation

Cosmos Predict generates future world states as videos from text, image, or video inputs. It supports post-training variants such as Single2MultiView, which creates multiple consistent camera perspectives from a single front-view driving video, yielding synchronized footage for autonomous vehicle development. Another post-training example is video relighting, which applies novel lighting to frames produced by an inverse renderer to generate relit video frames.

Cosmos Transfer uses multiple control modalities to steer world generation, including segmentation maps, depth, edge maps, lidar scans, keypoints, and HD maps. Combined with text prompts, these controls let users guide scene composition and produce diverse visual features. One workflow demonstrates how to generate RGB video from a text prompt and an HD map condition video.

Cosmos Reason is a reasoning VLM focused on embodied decision-making for physical AI. It is trained in two stages: supervised fine-tuning (SFT) to improve task-specific performance, and reinforcement learning (RL) to refine decision-making under real-world constraints. SFT can be applied to robotics visual question answering, with robovqa cited as an example dataset for this domain. Cosmos Reason can also serve as a data critic during synthetic data generation, helping curate higher-quality training data by understanding action sequences and real-world constraints.

The article presents these WFMs as part of NVIDIA Research's broader exploration of synthetic data generation (SDG) and data curation for physical AI, illustrated with concrete workflows and use cases such as post-training on robotics and autonomous driving tasks.
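To make the multi-control conditioning described for Cosmos Transfer more concrete, here is a minimal sketch of how a request combining a text prompt with several control modalities could be represented. Everything in it is an assumption for illustration: `ControlSpec`, the modality keys, and the choice to normalize per-modality weights so they sum to 1 are hypothetical and do not reflect the actual Cosmos Transfer configuration format.

```python
from dataclasses import dataclass, field
from typing import Dict

# Modality names taken from the control inputs listed in the text;
# the exact key spellings are an assumption of this sketch.
ALLOWED_MODALITIES = {"segmentation", "depth", "edge", "lidar", "keypoints", "hdmap"}


@dataclass
class ControlSpec:
    """A text prompt plus relative weights for each control input (hypothetical)."""
    prompt: str
    weights: Dict[str, float] = field(default_factory=dict)

    def normalized(self) -> Dict[str, float]:
        """Validate the weights and rescale them so they sum to 1."""
        unknown = set(self.weights) - ALLOWED_MODALITIES
        if unknown:
            raise ValueError(f"unknown control modalities: {sorted(unknown)}")
        if any(w < 0 for w in self.weights.values()):
            raise ValueError("control weights must be non-negative")
        total = sum(self.weights.values())
        if total <= 0:
            raise ValueError("at least one control weight must be positive")
        return {name: w / total for name, w in self.weights.items()}


spec = ControlSpec(
    prompt="rainy city street at dusk",
    weights={"depth": 1.0, "hdmap": 3.0},
)
print(spec.normalized())  # prints {'depth': 0.25, 'hdmap': 0.75}
```

Validating the modality names up front means a misspelled control input fails loudly instead of being silently ignored.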

Key takeaways

- World Foundation Models (WFMs) enable scalable synthetic data generation and data curation for physical AI applications.

- Cosmos Predict, Cosmos Transfer, and Cosmos Reason provide complementary capabilities for predicting future states, controllable scene generation, and reasoning-based data curation.

- Post-training enables adapting WFMs to specific robotics and autonomous vehicle tasks, reducing the need for new models from scratch.

- Examples like Single2MultiView and video relighting demonstrate practical SDG and data augmentation workflows for device- and task-specific needs.

- Cosmos Reason adds a reasoning-based critique that can guide data selection and improve robotics VQA and related tasks through SFT and RL training.

- The content underscores NVIDIA Research’s focus on practical workflows and invites engagement through events and educational resources, including the NVIDIA Robotics Digest series.

FAQ

- What are Cosmos WFMs?

Cosmos WFMs are World Foundation Models from NVIDIA that can be post-trained for specific robotics and physical AI applications, including prediction, transfer, and reasoning tasks.

- How do Cosmos Predict, Cosmos Transfer, and Cosmos Reason differ in purpose?

Cosmos Predict generates future world states as videos from prompts; Cosmos Transfer enables photoreal style transfers and scene generation from multiple control inputs; Cosmos Reason is a reasoning VLM that can curate and annotate data and be post-trained as a robot VLA model.

- What is R²D² in this context?

R²D² is the NVIDIA Robotics Research and Development Digest, which explores Cosmos WFMs and workflows from NVIDIA Research to accelerate synthetic data generation and data curation for physical AI.

- How is Cosmos Reason trained?

Cosmos Reason is trained in two stages: supervised fine-tuning (SFT) to improve task-specific performance, and reinforcement learning (RL) to refine embodied decision-making.

- How can developers use these workflows in practice?

Developers can use post-training to tailor synthetic data generation to their tasks, augment existing datasets, and improve sim-to-real transfer for robotics and autonomous driving, guided by data curation from Cosmos Reason.

More news

NVIDIA HGX B200 Reduces Embodied Carbon Emissions Intensity

NVIDIA HGX B200 lowers embodied carbon intensity by 24% vs. HGX H100, while delivering higher AI performance and energy efficiency. This article reviews the PCF-backed improvements, new hardware features, and implications for developers and enterprises.

Shadow Leak shows how ChatGPT agents can exfiltrate Gmail data via prompt injection

Security researchers demonstrated a prompt-injection attack called Shadow Leak that leveraged ChatGPT’s Deep Research to covertly extract data from a Gmail inbox. OpenAI patched the flaw; the case highlights risks of agentic AI.

Predict Extreme Weather in Minutes Without a Supercomputer: Huge Ensembles (HENS)

NVIDIA and Berkeley Lab unveil Huge Ensembles (HENS), an open-source AI tool that forecasts low-likelihood, high-impact weather events using 27,000 years of data, with ready-to-run options.

How to Reduce KV Cache Bottlenecks with NVIDIA Dynamo

NVIDIA Dynamo offloads KV Cache from GPU memory to cost-efficient storage, enabling longer context windows, higher concurrency, and lower inference costs for large-scale LLMs and generative AI workloads.

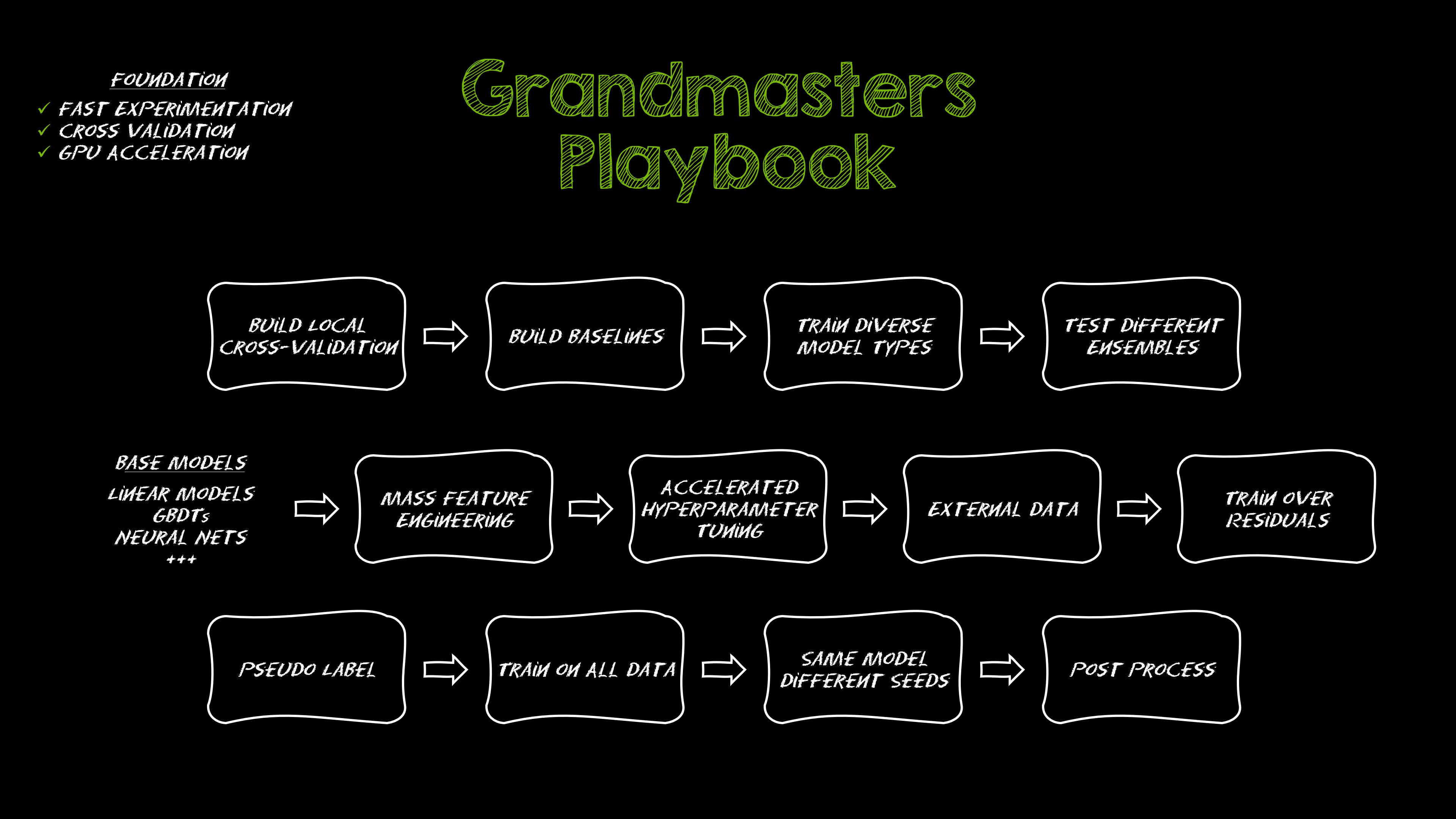

Kaggle Grandmasters Playbook: 7 Battle-Tested Techniques for Tabular Data Modeling

A detailed look at seven battle-tested techniques used by Kaggle Grandmasters to solve large tabular datasets fast with GPU acceleration, from diversified baselines to advanced ensembling and pseudo-labeling.

Microsoft to turn Foxconn site into Fairwater AI data center, touted as world's most powerful

Microsoft unveils plans for a 1.2 million-square-foot Fairwater AI data center in Wisconsin, housing hundreds of thousands of Nvidia GB200 GPUs. The project promises unprecedented AI training power with a closed-loop cooling system and a cost of $3.3 billion.