Optimizing Contextual Speech Recognition with Vector Quantization for Efficient Retrieval

Source: https://machinelearning.apple.com/research/optimizing-contextual

TL;DR

- Contextual biasing for speech recognition often relies on cross-attention between audio and a biasing catalog, which can be prohibitively costly for large catalogs.

- The paper proposes an approximation to cross-attention scoring based on vector quantization to enable compute- and memory-efficient retrieval of bias entries grounded on audio.

- The approach is combined with a retrieval-based biasing workflow and is agnostic to the underlying biasing method (full cross-attention, prompting with LLMs, or both).

- Empirical results show that retrieval-based shortlisting enables leveraging catalogs of thousands to a million entries, delivering up to 71% relative error rate reduction in personal entity recognition and reducing compute time by ~20% and memory usage by 85–95% for large catalogs.

- The work was accepted to the IEEE Spoken Language Technology Workshop (SLT) 2024.

Context and background

Neural contextual biasing improves transcription accuracy by injecting contextually relevant information into speech recognition models. Biasing mechanisms typically rely on a cross-attention module that attends to a catalog of biasing entries in conjunction with the spoken audio. While effective, this strategy scales poorly: every entry in the catalog has to be scored against the audio, so compute and memory demands grow with catalog size. This limits both the size of the biasing catalog that can be used in practice and the accuracy benefits obtainable from larger, more diverse catalogs. The challenge is to get expansive, context-aware biasing within the limits of resource-constrained deployment.

What’s new

The authors address the scalability bottleneck with a quantized retrieval step that acts as an efficient pre-filter for biasing candidates. Specifically, they propose an approximation to cross-attention scoring based on vector quantization. The workflow has two stages: first, a fast quantized retrieval module grounds biasing entries on the audio signal and shortlists a subset of entries; then, only the retrieved subset is passed to the chosen biasing method.

This retrieval-based shortlisting can be combined with various biasing strategies. The authors investigate full cross-attention, prompting with large language models (LLMs), and a combination of the two, so practitioners can choose the integration that fits their resource constraints and task requirements.

The approach scales to large bias catalogs, from thousands of entries up to one million. The study reports substantial improvements in recognition accuracy, particularly for personal entity recognition, along with reductions in compute and memory usage compared with standard dot-product cross-attention on large catalogs.
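The two-stage workflow described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's implementation: the codebook is built with plain k-means as a stand-in for whatever quantizer the authors train, each catalog entry is represented by a single key vector, scores are pooled over audio frames with a max, and all function names (`build_codebook`, `shortlist`, `bias_with_cross_attention`) are hypothetical.

```python
import numpy as np


def assign(keys, codebook):
    """Index of the nearest codeword for every key (squared Euclidean distance)."""
    d2 = ((keys ** 2).sum(axis=1, keepdims=True)
          - 2.0 * keys @ codebook.T
          + (codebook ** 2).sum(axis=1))          # shape (N, K)
    return d2.argmin(axis=1)


def build_codebook(keys, num_codes, iters=10, seed=0):
    """Plain k-means over catalog keys (assumption: stand-in for a learned quantizer)."""
    rng = np.random.default_rng(seed)
    codebook = keys[rng.choice(len(keys), size=num_codes, replace=False)].copy()
    for _ in range(iters):
        codes = assign(keys, codebook)
        for j in range(num_codes):
            members = keys[codes == j]
            if len(members):                      # leave empty clusters unchanged
                codebook[j] = members.mean(axis=0)
    return codebook, assign(keys, codebook)


def shortlist(queries, codebook, codes, top_k):
    """Stage 1: approximate q . k_i by q . c[code_i], then keep the top_k entries."""
    code_scores = queries @ codebook.T            # (T, K): one dot product per codeword
    # Per-entry score is a table lookup; max over audio frames is an assumed pooling.
    entry_scores = code_scores[:, codes].max(axis=0)      # shape (N,)
    top = np.argpartition(-entry_scores, top_k - 1)[:top_k]
    return top[np.argsort(-entry_scores[top])]    # best first


def bias_with_cross_attention(queries, keys, values):
    """Stage 2: exact dot-product cross-attention over the shortlisted entries only."""
    logits = queries @ keys.T / np.sqrt(keys.shape[1])    # (T, top_k)
    logits = logits - logits.max(axis=1, keepdims=True)   # numerically stable softmax
    weights = np.exp(logits)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ values                       # (T, d) biasing context per frame


# Toy data: 5,000 catalog entries and 20 audio frames, all 32-dimensional.
rng = np.random.default_rng(0)
catalog_keys = rng.normal(size=(5000, 32)).astype(np.float32)
audio_queries = rng.normal(size=(20, 32)).astype(np.float32)

codebook, codes = build_codebook(catalog_keys, num_codes=64)
ids = shortlist(audio_queries, codebook, codes, top_k=50)
context = bias_with_cross_attention(audio_queries, catalog_keys[ids], catalog_keys[ids])
```

In this sketch the full-catalog pass touches only the 64 codewords and an integer index per entry; the exact attention in stage 2 runs over 50 entries instead of 5,000. Stage 2 could equally be replaced by formatting the shortlisted entries into an LLM prompt, since the shortlist is just a list of entry indices.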

Why it matters (impact for developers/enterprises)

For developers and enterprises deploying speech recognition in real-world settings, the main benefit is the ability to use extensive contextual bias catalogs without prohibitive compute and memory costs. The work shows that large, diverse bias catalogs can improve transcription quality, especially for personal entities, while compute time and memory usage go down relative to standard cross-attention. From an engineering perspective, the vector-quantization-based retrieval module reduces the memory needed to keep a massive bias catalog available and limits how much scoring cost grows with catalog size. For organizations building voice-enabled applications under strict performance or energy constraints, this offers a practical path to richer contextualization.

Technical details (how it works)

- Problem framing: Contextual biasing in ASR traditionally uses cross-attention between the audio representation and a biasing catalog. The attention scoring step becomes a bottleneck as catalog size increases.

- Vector quantization-based approximation: The authors introduce an efficient quantized retrieval module that grounds biasing entries on audio. This module shortlists candidates by quantizing representations and performing approximate matching, dramatically reducing the search space before biasing.

- Retrieval first, then bias: After shortlisting, the retrieved entries are fed into the biasing stage. Because the shortlisted set is small, downstream biasing computations (full cross-attention, LLM prompting, or a combination) become tractable for much larger catalogs.

- Agnostic to biasing method: The retrieval step is designed to be agnostic to the choice of biasing mechanism. This enables experimentation with traditional cross-attention, LLM prompting, or both within the same retrieval framework.

- Scale and results: The method supports catalogs of up to one million entries. Compared with standard dot-product cross-attention on large catalogs, it reduces compute time by about 20% and memory usage by 85–95%, while delivering substantial accuracy gains (up to 71% relative error rate reduction in personal entity recognition).
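The scoring approximation in the bullets above can be written out as follows. This is an illustrative formulation, not notation taken from the paper: the symbols $q$ (audio query), $k_i$ (key of catalog entry $i$), $c_j$ (codeword $j$), $N$ (catalog size), $K$ (codebook size), and $d$ (embedding dimension) are all assumptions, and each entry is assumed to have a single key vector.

```latex
\begin{align*}
  s_i &= q^{\top} k_i, \quad i = 1, \dots, N
      && \text{exact dot-product score} \\
  z_i &= \arg\min_{j \in \{1, \dots, K\}} \lVert k_i - c_j \rVert_2^2
      && \text{nearest codeword, computed offline} \\
  \hat{s}_i &= q^{\top} c_{z_i}
      && \text{approximate score: a lookup into } K \text{ precomputed products} \\
  \lvert s_i - \hat{s}_i \rvert &= \lvert q^{\top} (k_i - c_{z_i}) \rvert
      \le \lVert q \rVert_2 \, \lVert k_i - c_{z_i} \rVert_2
      && \text{error bounded by the quantization error}
\end{align*}
```

Under these assumptions, exact scoring costs on the order of $Nd$ multiply-adds per query and stores $Nd$ floats, whereas the quantized version costs on the order of $Kd + N$ operations and stores $Kd$ floats plus $N$ integer indices. With $K \ll N$, that gap is the intuition behind the reported savings; the actual figures depend on details of the authors' setup that this sketch does not model.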

Key takeaways

- Vector quantization can effectively approximate cross-attention scoring for contextual biasing, enabling scalable retrieval from large catalogs.

- A retrieval-based shortlisting stage reduces computational and memory loads, making it feasible to use bias catalogs with thousands to a million entries.

- The approach is versatile: it can be paired with full cross-attention, LLM prompting, or both, without being tied to a single biasing method.

- Empirical gains include up to 71% relative error rate reduction in personal entity recognition, about 20% less compute time, and 85–95% lower memory usage for catalogs of up to one million entries.

| Metric | Benefit |
|---|---|
| Personal entity recognition error rate reduction | Up to 71% relative |
| Compute time reduction | About 20% |
| Memory usage reduction | 85–95% for catalogs up to 1M entries |
| Catalog size supported | Thousands to 1,000,000 entries |

FAQ

- What problem does this work address?

  It tackles the computational and memory bottlenecks of using large contextual bias catalogs in ASR by introducing a vector-quantization-based retrieval step before biasing.

- How does vector quantization help in this context?

  It provides an efficient approximation to cross-attention scoring, enabling fast retrieval of relevant bias entries grounded on audio and so reducing the number of entries the biasing stage has to consider.

- Is the retrieval module tied to a specific biasing method?

  No. The retrieval approach is agnostic to the biasing method and was evaluated with full cross-attention, LLM prompting, and a combination of the two.

- Where can I read more about this work?

  The study is described in a paper accepted to the IEEE Spoken Language Technology Workshop (SLT) 2024, available from the [Apple Machine Learning Research page](https://machinelearning.apple.com/research/optimizing-contextual).

References

- Optimizing Contextual Speech Recognition with Vector Quantization for Efficient Retrieval. IEEE SLT 2024. https://machinelearning.apple.com/research/optimizing-contextual

More news

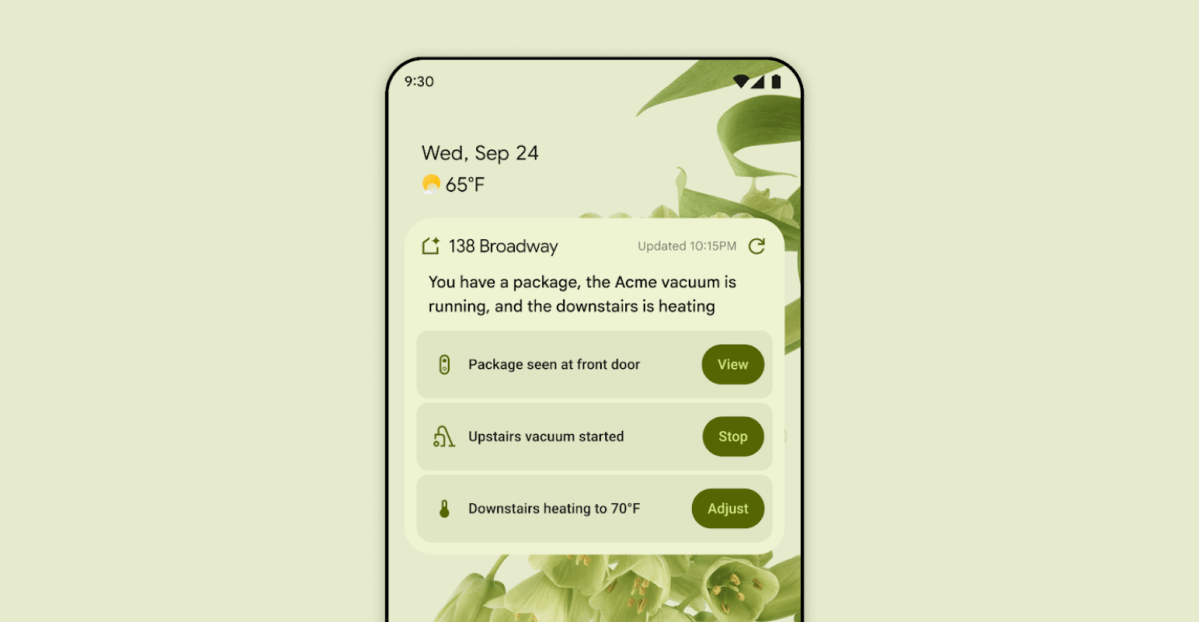

First look at the Google Home app powered by Gemini

The Verge reports Google is updating the Google Home app to bring Gemini features, including an Ask Home search bar, a redesigned UI, and Gemini-driven controls for the home.

Shadow Leak shows how ChatGPT agents can exfiltrate Gmail data via prompt injection

Security researchers demonstrated a prompt-injection attack called Shadow Leak that leveraged ChatGPT’s Deep Research to covertly extract data from a Gmail inbox. OpenAI patched the flaw; the case highlights risks of agentic AI.

Predict Extreme Weather in Minutes Without a Supercomputer: Huge Ensembles (HENS)

NVIDIA and Berkeley Lab unveil Huge Ensembles (HENS), an open-source AI tool that forecasts low-likelihood, high-impact weather events using 27,000 years of data, with ready-to-run options.

Scaleway Joins Hugging Face Inference Providers for Serverless, Low-Latency Inference

Scaleway is now a supported Inference Provider on the Hugging Face Hub, enabling serverless inference directly on model pages with JS and Python SDKs. Access popular open-weight models and enjoy scalable, low-latency AI workflows.

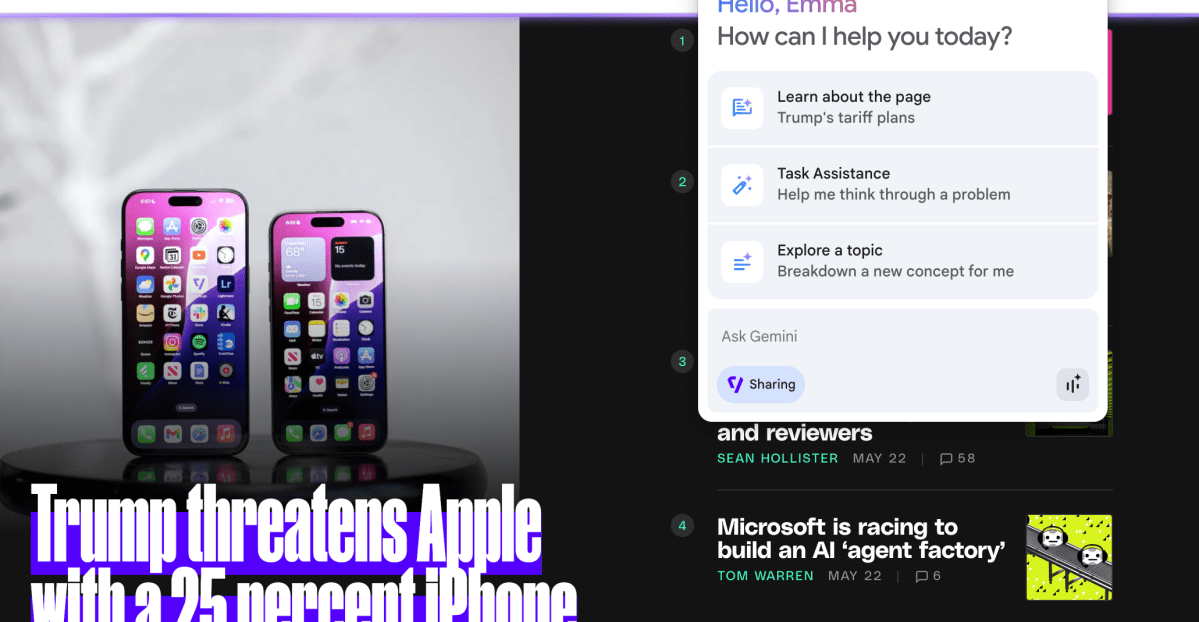

Google expands Gemini in Chrome with cross-platform rollout and no membership fee

Gemini AI in Chrome gains access to tabs, history, and Google properties, rolling out to Mac and Windows in the US without a fee, and enabling task automation and Workspace integrations.

How to Reduce KV Cache Bottlenecks with NVIDIA Dynamo

NVIDIA Dynamo offloads KV Cache from GPU memory to cost-efficient storage, enabling longer context windows, higher concurrency, and lower inference costs for large-scale LLMs and generative AI workloads.