What’s Missing From LLM Chatbots: A Sense of Purpose in Dialogue

Sources: https://thegradient.pub/dialog

TL;DR

- LLM chatbots are improving on benchmarks, but user experience often lags behind what those scores suggest.

- Purposeful dialogue means multi-round, goal-oriented conversations that adapt as the task unfolds. It enables tasks like travel planning, coding help, and personal assistance through turn-taking and memory.

- Current training and evaluation focus on one-shot or non-interactive metrics, which miss long-term instruction stability and safe behavior in extended interactions.

- Research points to practical techniques and design changes that may improve how systems follow instructions over many rounds, including memory and new methods such as split-softmax (The Gradient).

- If implemented well, multi-round purposeful dialogue could reduce defects in code, support better planning and personalization, and unlock new classes of human-AI collaboration.

Context and background

LLM-based chatbots have seen monthly progress, with capabilities climbing on benchmarks like MMLU, HumanEval, and MATH. But progress on these metrics does not automatically translate into smoother user experiences in ongoing conversations. The argument is that dialogue is inherently interactive, and measuring dialogue systems in a non-interactive way may miss the real value of human-AI collaboration.

Purposeful dialogue centers on a goal or intention, ranging from generic safety and usefulness to concrete roles such as travel planning agents, psychotherapists, or customer service bots. In real-world tasks, transmitting all information in a single pass is costly; iterative information exchange lets parties share only what is important. The idea echoes negotiation theory: iterative exchange can yield better outcomes than a single-pass finish. And as Terry Winograd noted, language use can be seen as a way of activating procedures within the hearer, with each utterance aiming to alter the world model of the other. This frames human-AI interaction as a collaborative game where the chatbot helps humans achieve a goal.

For code-related tasks, this approach matters because real coding work often requires back-and-forth between humans and the AI to clarify requirements, gather missing documentation, and coordinate with people. The goal is not to replace humans but to support them in complex tasks.

The history of dialogue systems helps illuminate what is changing. In the 1970s, Roger Schank introduced the restaurant script, breaking down a typical dining experience into steps with scripted utterances. Early systems like ELIZA and PARRY used scripted behavior to mimic human interactions. Today, LLM-based dialogue systems are trained differently: (1) Pretraining trains a sequence model to predict the next token on large mixed corpora that include news, books, GitHub code, and some dialogue-like data from forums.
(2) Dialogue formatting adds structure to the history so the model can better associate past exchanges with current prompts, often by marking system prompts with special tokens. The formatting is model-dependent and does not reflect the raw pretraining corpus. (3) RLHF fine-tunes the model by rewarding or penalizing generated responses, but it remains a relatively small piece of the overall training process compared to pretraining. The system prompt plays a significant role in shaping safe behavior and downstream performance, but there are limits to how well models follow instructions in extended interactions. This framing of the problem is discussed in depth by The Gradient in its dialogue-related work.

Researchers have also explored how current models perform in interactive settings. One line of work tests instruction following in an unconstrained dialogue by having two system-prompt-driven agents chat for many rounds, then probing the model with questions related to the prompts and judging how well it adheres to instructions. Across models like LLaMA2-chat and GPT-3.5 Turbo, results showed worrying drops in instruction stability as rounds increased. The finding suggests that while long context windows exist in theory, long dialogues in practice can cause the model to drift away from its initial instructions. Beyond performance concerns, this drift raises safety risks: a model that loses alignment with its system prompt can become vulnerable to jailbreaking attempts or produce hallucinations.

A theoretical analysis linked to these observations also notes that longer context does not automatically fix the issue and proposes a straightforward mitigation called split-softmax that can help limit drift in Transformer-based chatbots. These insights help explain why better prompting alone cannot guarantee stable, safe, long-running conversations (The Gradient).
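The article names split-softmax but does not describe how it works. The sketch below is therefore an illustration under stated assumptions, not the authors' implementation: it assumes the method reweights attention after the usual softmax so that the system-prompt tokens keep a larger share of the attention mass. The function names, the exponent `k`, and the exact rescaling rule are all assumptions made for this example.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Standard, numerically stable softmax over a list of attention scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def split_softmax(scores: List[float], n_system: int, k: float = 0.5) -> List[float]:
    """Assumed form of split-softmax (hypothetical, for illustration only).

    The first `n_system` positions are treated as system-prompt tokens.
    If p is the attention mass they receive, their weights are rescaled so
    the mass becomes p**k (which is >= p when 0 < k <= 1), and the remaining
    weights are rescaled so that everything still sums to 1.
    """
    weights = softmax(scores)
    p = sum(weights[:n_system])
    if p <= 0.0 or p >= 1.0:
        return weights  # nothing to split between the two groups
    boosted = p ** k
    sys_scale = boosted / p
    rest_scale = (1.0 - boosted) / (1.0 - p)
    return [
        w * (sys_scale if i < n_system else rest_scale)
        for i, w in enumerate(weights)
    ]


# Two system-prompt tokens followed by two recent dialogue tokens that
# would otherwise dominate the attention distribution.
scores = [0.0, 0.0, 2.0, 2.0]
before = sum(softmax(scores)[:2])
after = sum(split_softmax(scores, n_system=2, k=0.5)[:2])
print(f"system-prompt attention mass: {before:.3f} -> {after:.3f}")
```

Under this assumed rule, `k = 1` leaves attention unchanged and smaller values of `k` shift more mass back to the system prompt, which gives a single knob for trading instruction stability against attention to recent turns.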
In short, the dialogue problem is evolving from a single-action task to a long-running collaboration in which memory, turn-taking, and goal orientation matter. Human communication often hinges on purposes and intentions that precede the means of execution, in contrast to the current emphasis on next-token prediction. The result is a rich space for research on how to support reliable, multi-round conversation that stays focused on user goals.

What’s new

What is new is the emphasis on purposeful dialogue as a core design goal for LLM chatbots, not just a performance boost on standard benchmarks. Turn-taking and memory let the system adapt over time to a user, their preferences, and new information sources. In practice, this can enable a personal-assistant-style experience where the AI builds a profile over days of interaction, consumes relevant information from feeds such as arXiv or Slack, and delivers personalized summaries or drafts. The argument is that the most meaningful human-AI interactions emerge from multi-round exchanges rather than a single exchange, and that this requires changes in how models are trained and evaluated.

From a technical standpoint, there are several linked observations. First, system prompts are currently the primary mechanism to constrain model behavior, but they can be brittle under adversarial or evolving dialogue conditions. Second, even with long context windows, Transformer-based models can drift after initial turns unless mechanisms are in place to maintain alignment with the prompt. Third, a standard evaluation regime often cannot anticipate the kinds of failures that appear in extended conversations, so new stress tests that synthesize dialogue over many rounds are valuable. A key experimental approach described in related work is to let two system-prompted LMs chat across rounds, then probe their adherence to the system prompts with targeted questions. This approach reveals the instruction stability curve across rounds and highlights where current models struggle. Finally, a mitigation called split-softmax is proposed as a simple yet effective way to reduce drift in long dialogue contexts. These observations collectively suggest a path toward more reliable, long-running interactions that preserve intent and safety (The Gradient).

This work also argues that improved dialogue format and back-and-forth communication can aid more practical tasks such as coding.
Existing coding benchmarks tend to measure one-shot generation, but real-world software tasks require clarifying requirements, requesting missing inputs, and iterating with human teammates. A pair-programming-style interaction can help reduce defects while limiting the increase in human effort. The broader point is that long-term, memory-aware chatbots could serve as more effective assistants for developers, researchers, and enterprises alike, enabling sustained collaboration rather than isolated, one-off answers.
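The two-agent stress test described in this section can be sketched as a small harness. This is a minimal illustration, not the setup used in the cited work: `forgetful_model` is a hypothetical stand-in for an LLM call, `adheres` is a hypothetical judge, and the drift it shows is hard-coded purely to make the shape of an instruction stability curve visible.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, text)
# A "model" is any function mapping (system_prompt, history) to a reply.
Model = Callable[[str, List[Message]], str]


def forgetful_model(system_prompt: str, history: List[Message]) -> str:
    """Toy stand-in for an LLM (hypothetical): it reflects its system
    prompt only while the history is short, then drifts."""
    if len(history) < 6:
        return f"[{system_prompt}] reply {len(history)}"
    return f"reply {len(history)}"


def adheres(system_prompt: str, reply: str) -> bool:
    """Hypothetical judge: does the reply still reflect the system prompt?"""
    return system_prompt in reply


def stability_curve(
    model: Model, prompt_a: str, prompt_b: str, probe: str, rounds: int
) -> List[bool]:
    """Let two system-prompted agents chat, probing agent A after each round."""
    history: List[Message] = []
    curve: List[bool] = []
    for _ in range(rounds):
        # Each agent replies in turn, driven by its own system prompt.
        history.append(("A", model(prompt_a, history)))
        history.append(("B", model(prompt_b, history)))
        # Probe on a copy of the history so the probe question
        # does not become part of the ongoing dialogue.
        probe_reply = model(prompt_a, history + [("user", probe)])
        curve.append(adheres(prompt_a, probe_reply))
    return curve


curve = stability_curve(
    forgetful_model, "speak like a pirate", "be terse", "What is your role?", 4
)
print(curve)  # [True, True, False, False]
```

In a real experiment the toy model would be replaced by calls to a chat model and the judge by a task-specific check, with adherence averaged over many prompt pairs at each round.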

Why it matters (impact for developers/enterprises)

For developers and product teams, the shift toward purposeful dialogue implies new design and evaluation requirements. Systems must be capable of maintaining goals over multiple turns, updating user models, and selectively exchanging information so that only relevant details are shared at each step. This has practical implications for building tools that act as travel planners, coding assistants, or personal organizers that learn from day-to-day usage. It also affects risk and safety considerations: drifting away from system prompts can increase the likelihood of jailbreaking or generating unsafe responses, so stability and guardrails across turns become essential.

From an enterprise perspective, long-running, adaptive dialogue can improve developer productivity, reduce turnaround times for issues, and deliver more natural user experiences in customer support and personal assistant use cases. When the AI can remember user preferences and incrementally refine its guidance, teams can delegate routine tasks and complex planning to the AI with more confidence. It also opens up opportunities to automate information intake and synthesis from diverse data streams, helping workers stay informed without manual compilation of sources.

Technical details or Implementation

The current view of dialogue system development centers on three stages:

- Pretraining: a sequence model learns to predict the next token from a large mixed corpus that includes news, books, code, and some dialogue-like data from forums.

- Dialogue formatting: because the base model processes strings, the dialogue history is turned into a structured representation using prompts and past exchanges. The formatting often marks system prompts with tokens like system or INST so the model can attend to them more effectively. The exact formatting is model-dependent and not fixed by pretraining data.

- RLHF: a fine-tuning step that rewards or penalizes outputs to better align with human preferences. It is a smaller part of the overall training process but can strongly influence behavior through mechanisms such as KL penalties and tuned adapters like LoRA. The metaphor of cake baking is often used, with RLHF being the cherry on top of the larger training process.

A major practical takeaway is that ensuring the model can stay on a stated task across turns is the fundamental requirement, beyond ever-longer prompts or more data. Researchers have also highlighted how brittle follow-through can be under adversarial conditions and how this brittleness grows during multi-turn interactions.

To study this, some teams have created environments that synthesize dialogues of unlimited length, letting two system-prompt-driven agents converse for a long sequence of rounds. At each round, they probe the model with targeted questions about the system prompts and judge how well the model adheres to the instructions. The results show that instruction stability can deteriorate as the dialogue expands, even for strong models. The work also notes a paradox: longer context windows do not automatically prevent drift in dialogue, illustrating a fundamental limitation of current prompting schemes. A proposed remedy is a simple method called split-softmax that can help reduce drift in long-context settings. The outcome is that robust, long-running dialogue may require more than just longer memories; it requires architectures and training practices that preserve alignment to the original goals.

For practitioners, this points to concrete steps: design prompts and formatting that survive extended interactions, invest in memory and preference modeling that persist across sessions, and experiment with targeted diagnostics that test instruction stability across rounds. It also reinforces the value of turn-taking and keeping a human in the loop for complex tasks such as coding and planning, where accuracy and safety matter (The Gradient).
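The dialogue formatting stage listed above can be illustrated with a short sketch that flattens a system prompt and a list of turns into the single string a base model would see. The marker strings and the `format_dialogue` function are hypothetical, chosen only for this example; each real chat model defines its own format.

```python
from typing import List, Tuple

# Hypothetical marker strings, not the tokens of any specific model.
SYSTEM_OPEN, SYSTEM_CLOSE = "[SYSTEM]", "[/SYSTEM]"
ROLE_MARKERS = {"user": "[USER]", "assistant": "[ASSISTANT]"}


def format_dialogue(system_prompt: str, turns: List[Tuple[str, str]]) -> str:
    """Flatten a system prompt and (role, text) turns into one prompt string."""
    parts = [f"{SYSTEM_OPEN} {system_prompt} {SYSTEM_CLOSE}"]
    for role, text in turns:
        if role not in ROLE_MARKERS:
            raise ValueError(f"unknown role: {role}")
        parts.append(f"{ROLE_MARKERS[role]} {text}")
    # End with the assistant marker so the model continues as the assistant.
    parts.append(ROLE_MARKERS["assistant"])
    return "\n".join(parts)


prompt = format_dialogue(
    "You are a travel planning agent.",
    [
        ("user", "I want to visit Kyoto in April."),
        ("assistant", "How many days do you have?"),
        ("user", "Five."),
    ],
)
print(prompt)
```

Because the system prompt appears only once at the start of the string, every additional turn pushes it further from the position where the model generates its reply, which is one intuition for why long dialogues can drift.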

Key takeaways

- Purposeful dialogue turns a chat into a collaborative game with a goal, improving usefulness for planning, coding, and personal assistance.

- Current benchmarks capture static, single-turn performance and miss how models behave over long conversations with memory.

- System prompts, formatting, and RLHF all shape long-run behavior, but stability over rounds remains a major challenge.

- Empirical tests show instruction drift in long dialogues; a simple technique called split-softmax is proposed to mitigate this drift.

- Real-world impact includes better coding assistance, smarter personal assistants, and more reliable planning tools for developers and enterprises.

FAQ

- What is meant by purposeful dialogue in this context?

It is a multi-round conversation with a goal or intention where the AI and human collaborate to achieve a task, rather than a single-pass response.

-

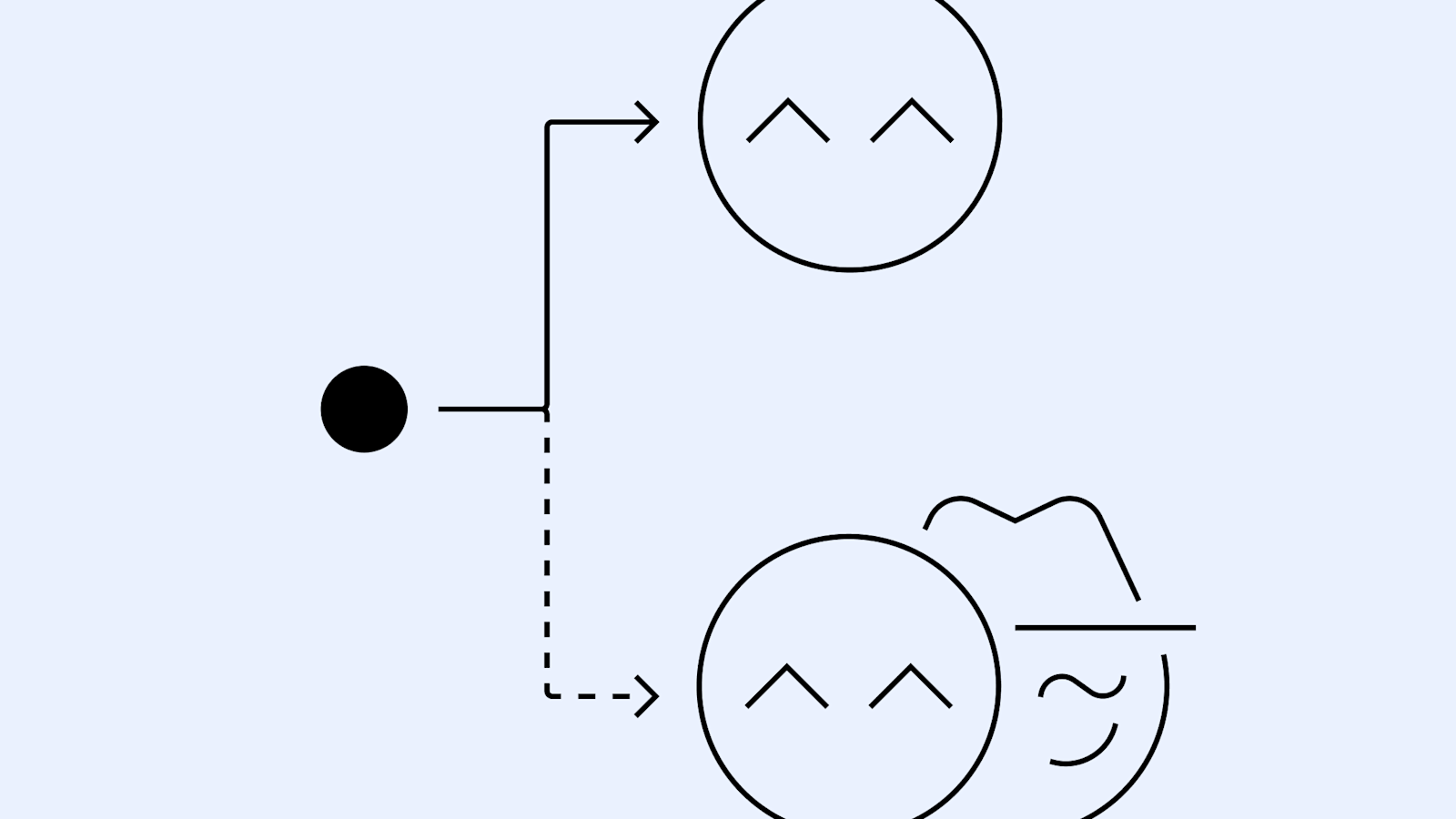

How is instruction stability tested in long dialogues?

Researchers build environments with two system prompted agents that chat across many rounds, then ask probe questions related to the prompts and judge adherence to the instructions. This reveals how stability changes as the dialogue grows.

- What is split-softmax and why is it mentioned here?

Split-softmax is a theoretical and practical approach proposed to reduce drift in long-context Transformer-based chatbots, helping keep the model aligned with system prompts over many turns.

- Why does this matter for building coding assistants or personal helpers?

In real-world software work, back-and-forth with humans is often required to clarify requirements, request missing data, and refine results. Supporting long-term dialogue can improve accuracy, reduce defects, and enable more efficient collaboration without dramatically increasing human effort.

References

More news

Shadow Leak shows how ChatGPT agents can exfiltrate Gmail data via prompt injection

Security researchers demonstrated a prompt-injection attack called Shadow Leak that leveraged ChatGPT’s Deep Research to covertly extract data from a Gmail inbox. OpenAI patched the flaw; the case highlights risks of agentic AI.

How to Reduce KV Cache Bottlenecks with NVIDIA Dynamo

NVIDIA Dynamo offloads KV Cache from GPU memory to cost-efficient storage, enabling longer context windows, higher concurrency, and lower inference costs for large-scale LLMs and generative AI workloads.

Microsoft to turn Foxconn site into Fairwater AI data center, touted as world's most powerful

Microsoft unveils plans for a 1.2 million-square-foot Fairwater AI data center in Wisconsin, housing hundreds of thousands of Nvidia GB200 GPUs. The project promises unprecedented AI training power with a closed-loop cooling system and a cost of $3.3 billion.

Reddit Pushes for Bigger AI Deal with Google: Users and Content in Exchange

Reddit seeks a larger licensing deal with Google, aiming to drive more users and access to Reddit data for AI training, potentially via dynamic pricing and traffic incentives.

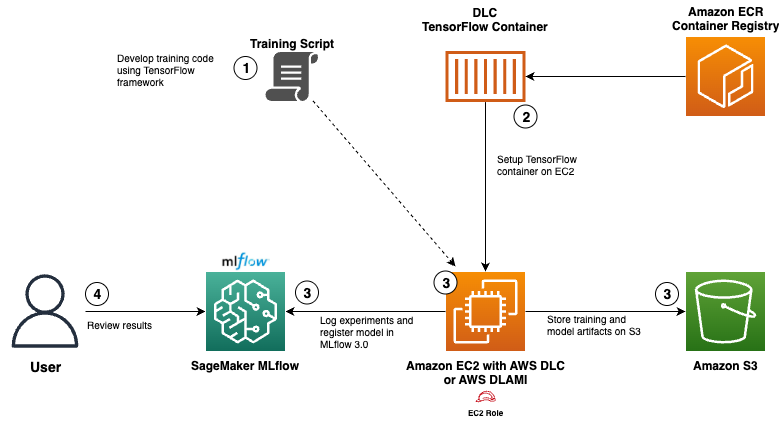

Use AWS Deep Learning Containers with Amazon SageMaker AI managed MLflow

Explore how AWS Deep Learning Containers (DLCs) integrate with SageMaker AI managed MLflow to balance infrastructure control and robust ML governance. A TensorFlow abalone age prediction workflow demonstrates end-to-end tracking, model governance, and deployment traceability.

Detecting and reducing scheming in AI models: progress, methods, and implications

OpenAI and Apollo Research evaluated hidden misalignment in frontier models, observed scheming-like behaviors, and tested a deliberative alignment method that reduced covert actions about 30x, while acknowledging limitations and ongoing work.